Control groups are at the heart of mobile A/B testing, yet their importance is often understated. Failure to use control groups correctly could cost you revenue. So, it’s well worth thinking carefully about how control groups could be used for your testing processes, and whether you could be missing opportunities by overlooking control groups.

When you set up an A/B test, usually you’re trying to answer a question: “should I do A or should I do B?”

Let’s say you want to increase user retention by sending a push notification to people who have been dormant for a few days. You’re considering personalizing the message by reminding the person of how long it’s been since they signed in. Your hypothesis is that personalizing will increase open rates, but you want to test it against the less-personalized message to confirm that personalization actually has the intended effect.

But there’s always a third option. The question isn’t “should I do A or B?” — it’s “should I do A, B, or nothing at all?”

That’s where control groups come in. Doing nothing is a decision in itself — and sometimes it’s the right decision. The only way to be sure that your changes aren’t doing more harm than good is to test everything against a control group. Let’s go over a few examples to see how control groups can be used for more accurate A/B testing.

Control Groups in an A/B Test

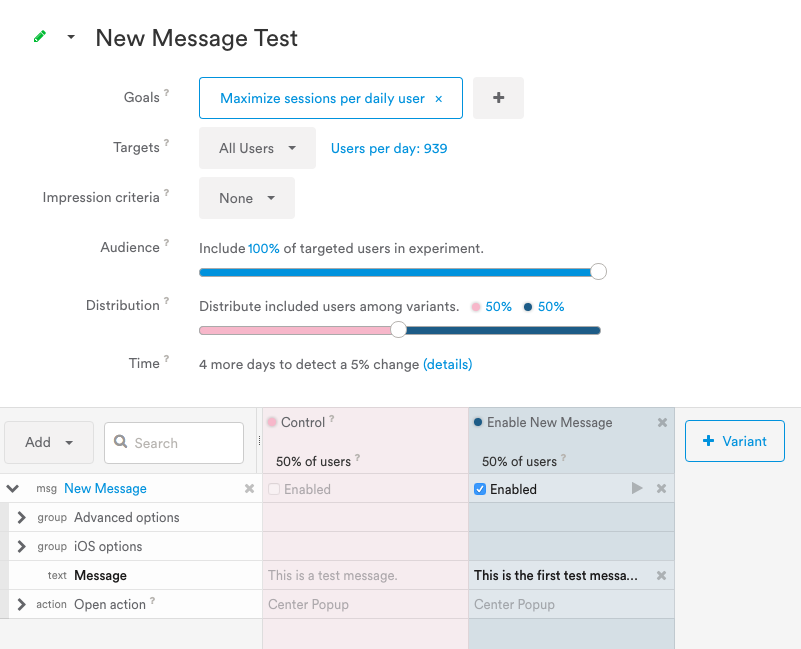

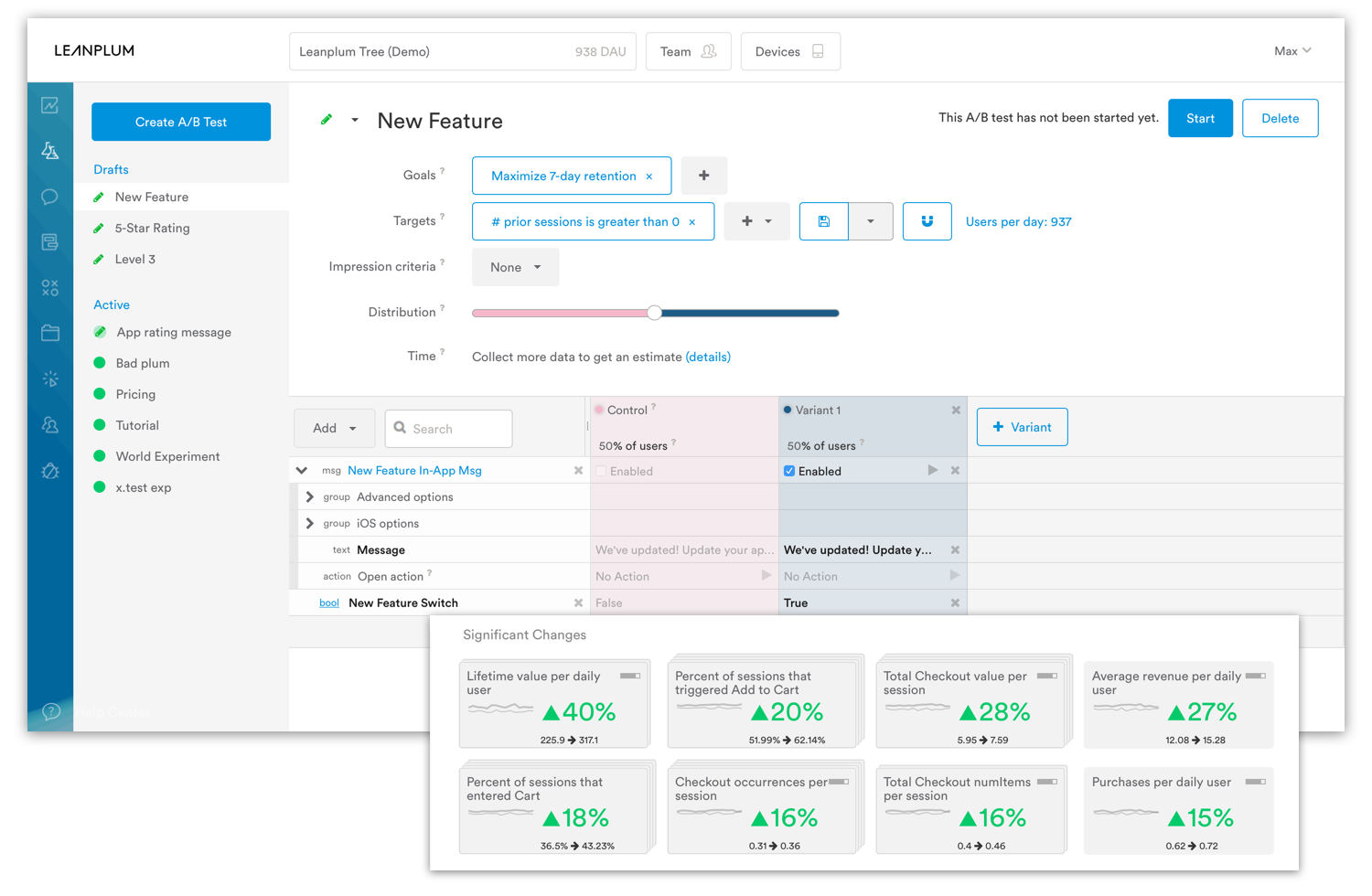

To begin, let’s take a look at Leanplum’s A/B testing screen.

A control group and a variant in Leanplum’s A/B testing dashboard.

As you can see, the first group is called “Control” by default, and its variables are grayed out. You can tweak the first variant as much as you’d like, but the control group’s variables are preset based on your app’s code, so the control group represents the current version of your app.

Now, if you’re in a rush to send a message and you want to test two versions of it, it’s tempting to work around this restriction. You could always adjust your app variables so that your control group represents one version of the proposed message and your first variant represents the other. But the results of that test would be unreliable: with neither group representing your app’s current behavior, there’s no baseline, so you couldn’t tell whether either message does better than sending nothing at all.

It might be slower, but control groups are the only way to guarantee that you’re not doing more harm than good. For an A/B test, that means you can only test one potential change at a time, because group A is always the control. So we strongly recommend keeping the control group in place for the duration of your test.

Control Groups in a Multivariate Test

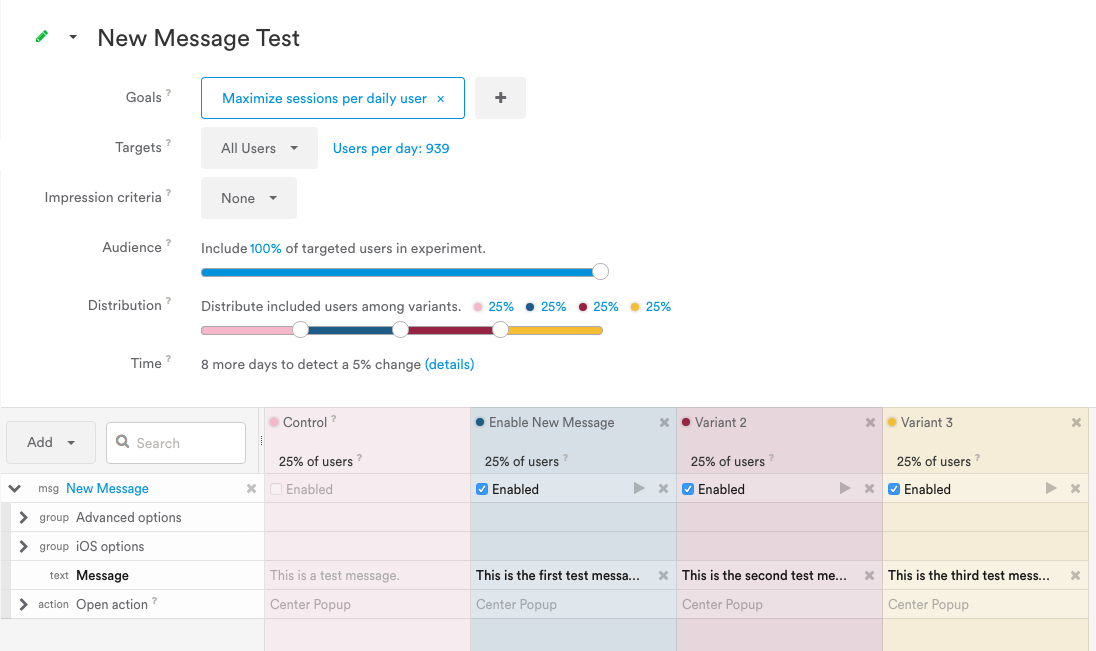

What about multivariate tests? If you’re looking to cover more ground with each test, multivariate tests (MVTs) isn’t a bad option. Here’s what a MVT would look like in Leanplum:

In addition to the control group, which in this example won’t receive a message, we’re now testing three different versions of the same message.

This can feel like a best-of-both-worlds scenario. You’re maintaining your data’s accuracy by including a control group, but you’re also working quickly by testing several variants at once.

Theoretically, multivariate tests allow for faster iterations. But note the difference under the “Time” heading. While this MVT is expected to take eight days to reach statistical significance, the A/B test up above is only expected to take four days! What does this mean?

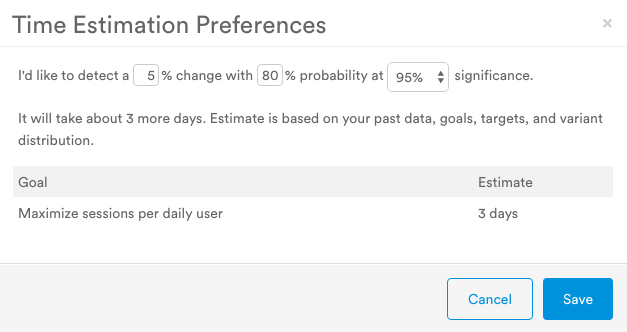

An experiment isn’t deemed complete until it can detect a given degree of change (say, five percent) with a given degree of certainty. The smaller the change, the smaller the margin of error needed to detect it, and the longer the test will take to complete.
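To make “a given degree of change with a given degree of certainty” concrete, here is a minimal Python sketch of the textbook sample-size formula for comparing two proportions. It assumes a two-sided test at a 5 percent significance level with 80 percent power; those defaults and the function name `users_per_group` are illustrative assumptions, not a description of how Leanplum’s estimator works.

```python
from math import ceil, sqrt
from statistics import NormalDist


def users_per_group(p_control: float, p_variant: float,
                    alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed in EACH group for a two-sided
    two-proportion z-test to detect a change from p_control to p_variant.
    (Illustrative sketch; alpha and power defaults are assumptions.)"""
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)  # certainty: significance level
    z_beta = std_normal.inv_cdf(power)           # chance of catching a real change
    p_pooled = (p_control + p_variant) / 2
    spread = (z_alpha * sqrt(2 * p_pooled * (1 - p_pooled))
              + z_beta * sqrt(p_control * (1 - p_control)
                              + p_variant * (1 - p_variant)))
    # Required sample grows with 1 / (size of the change) squared
    return ceil(spread ** 2 / (p_control - p_variant) ** 2)


print(users_per_group(0.20, 0.25))  # 5-point lift
print(users_per_group(0.20, 0.21))  # 1-point lift
```

Under these assumptions, detecting a lift from 20 to 25 percent needs on the order of 1,100 users per group, while a lift from 20 to 21 percent needs roughly 25,600, which is why smaller changes take longer to confirm.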

The longer the experiment runs, the more samples it collects. To pick an extreme example, what would happen if our push notifications were only sent to four users?

If both recipients of variant A opened it while only one of the two recipients of variant B did, the results would show that variant A had twice the open rate of variant B.

Of course, this statistic is misleading — the sample size is so small that the difference in open rates may have been due to random chance. We would need to collect more samples before comparing the two open rates.
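One way to quantify “may have been due to random chance” is a significance test. The sketch below swaps in Fisher’s exact test, a standard test for small two-group comparisons (not necessarily what Leanplum uses), and assumes the four users were split evenly, so variant A got 2 opens out of 2 and variant B got 1 out of 2. The helper name `fisher_exact_two_sided` is hypothetical.

```python
from math import comb


def fisher_exact_two_sided(a_opens: int, a_sent: int,
                           b_opens: int, b_sent: int) -> float:
    """Two-sided Fisher's exact test p-value for opens in two groups."""
    total_opens = a_opens + b_opens
    total_sent = a_sent + b_sent

    def prob(k: int) -> float:
        # Hypergeometric probability that group A receives k of the opens
        return (comb(total_opens, k)
                * comb(total_sent - total_opens, a_sent - k)
                / comb(total_sent, a_sent))

    observed = prob(a_opens)
    k_min = max(0, total_opens - b_sent)
    k_max = min(total_opens, a_sent)
    # Add up every possible outcome that is no more likely than the observed one
    probs = [prob(k) for k in range(k_min, k_max + 1)]
    return sum(p for p in probs if p <= observed * (1 + 1e-9))


print(fisher_exact_two_sided(2, 2, 1, 2))        # four users in total
print(fisher_exact_two_sided(40, 200, 20, 200))  # same 2x ratio, 400 users
```

For the four-user example the p-value is 1.0, so the data give no evidence of a real difference. The same 2x ratio at 40 of 200 versus 20 of 200 yields a p-value well below 0.05.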

Leanplum’s time estimator calculates roughly how long an A/B test will take to complete.

Furthermore, the degree of change affects the completion time.

If a metric grows from 20 percent to 25 percent, the control and the variant can each be allowed a margin of error of just under 2.5 percentage points. Even if each measured rate were off by a couple of percentage points, the two ranges (roughly 17.5 to 22.5 percent and 22.5 to 27.5 percent) would not overlap, so the difference is unlikely to be the result of random chance alone.

By contrast, a change from 20 percent to 21 percent would require a margin of error under 0.5 percentage points, so many more samples would be needed.
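The link between margin of error and sample size can be sketched with the normal-approximation formula for a proportion, n = z² p(1 − p) / m², where m is the margin. The Python below assumes a 95 percent confidence level; the function name `users_for_margin` is illustrative.

```python
from math import ceil
from statistics import NormalDist


def users_for_margin(rate: float, margin: float, confidence: float = 0.95) -> int:
    """Users needed so a rate's confidence interval is rate +/- margin,
    using the normal approximation. `margin` is absolute (0.025 = 2.5 points)."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return ceil(z ** 2 * rate * (1 - rate) / margin ** 2)


for margin in (0.025, 0.005):
    print(f"20% rate, +/-{margin:.1%} margin: {users_for_margin(0.20, margin)} users")
```

Shrinking the margin five-fold, from 2.5 to 0.5 percentage points, multiplies the required sample by about 25 (from just under 1,000 to roughly 24,600 users), because the margin shrinks only with the square root of the sample size.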

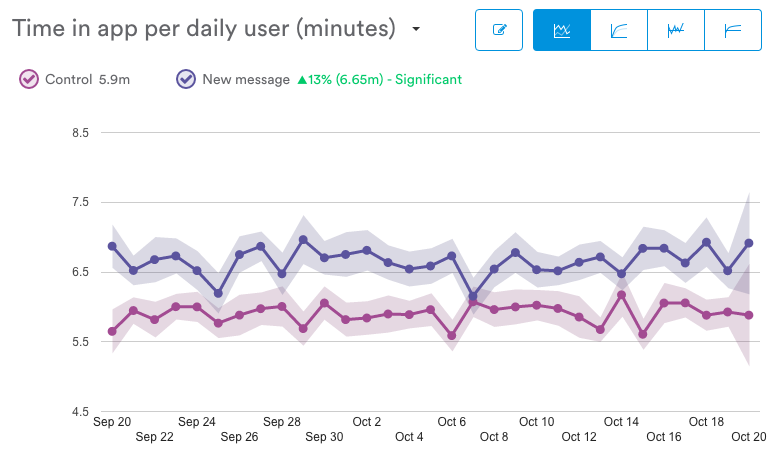

In the example above from the Leanplum dashboard, the margin of error is represented by the shaded area around each line. Since the ranges rarely touch, we can conclude that, on average, the “New Message” variant offers a statistically significant improvement over the control group.
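A rough version of the shaded-area check can be sketched in Python: compute a 95 percent confidence interval for each group’s rate and test whether the two ranges overlap. This uses the simple normal-approximation (Wald) interval, and the open counts are hypothetical; the dashboard may compute its bands differently.

```python
from math import sqrt
from statistics import NormalDist


def confidence_interval(opens: int, sent: int, confidence: float = 0.95):
    """Normal-approximation (Wald) interval for an open rate."""
    rate = opens / sent
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    margin = z * sqrt(rate * (1 - rate) / sent)
    return rate - margin, rate + margin


def ranges_overlap(a, b) -> bool:
    """True if intervals a = (low, high) and b = (low, high) share any values."""
    return a[0] <= b[1] and b[0] <= a[1]


# Hypothetical results: 20% vs 25% open rate at two sample sizes
for sent in (1_000, 1_500):
    control = confidence_interval(sent // 5, sent)  # 20% opened
    variant = confidence_interval(sent // 4, sent)  # 25% opened
    print(sent, ranges_overlap(control, variant))
```

With 1,000 users per group, a 20 versus 25 percent open rate still gives slightly overlapping ranges; at 1,500 users per group they separate. Non-overlapping intervals are a conservative rule of thumb: a direct test of the difference can reach significance even when the intervals touch slightly.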

The more variants you add to an experiment, the longer it will take to complete. There’s a time and a place for multivariate tests, but it is incorrect to assume that fitting more variants into a single test is inherently faster than testing one variant at a time.
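Why extra variants stretch the timeline can be sketched with simple arithmetic. The Python below assumes traffic is split evenly across groups and that every group, control included, needs the same number of users; the per-group requirement and daily traffic are hypothetical figures chosen to show how doubling the number of groups doubles the duration.

```python
from math import ceil


def days_to_complete(users_per_group: int, num_groups: int, daily_users: int) -> int:
    """Days until every group, control included, has users_per_group users,
    assuming eligible users are split evenly across the groups."""
    return ceil(users_per_group * num_groups / daily_users)


# Hypothetical inputs: 1,100 users needed per group, 550 eligible users per day
ab_days = days_to_complete(1_100, num_groups=2, daily_users=550)   # control + 1 variant
mvt_days = days_to_complete(1_100, num_groups=4, daily_users=550)  # control + 3 variants
print(ab_days, mvt_days)
```

Comparing several variants against one control usually also calls for a stricter significance threshold per comparison (for example, a Bonferroni correction), which raises the per-group requirement further, so this estimate is a lower bound. On a small segment with, say, 100 eligible users a day, the same MVT would take 44 days.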

For tests on small segments, you might not have enough users to feasibly run an MVT. In these situations, an A/B test will provide quicker and more stable results.

The Only Times Control Groups Are a Bad Idea

Control groups are a bad idea when the content is urgent. Statistical accuracy is important, but what if there’s an important app or account update that users need to know about? Using a control group means that some users won’t receive this urgent update until later.

In situations where you have to send a message, such as a terms of service update, the third option of not doing anything isn’t really an option. There’s no use in testing message copy against a control group, because you’ll have to send the message anyway, even if the control group wins. These are the only cases in which you’d be advised not to use a control group.

Overview of the A/B test interface.

Learn more about using control groups

The only thing worse than not A/B testing at all is drawing the wrong conclusions from your tests. With this information in mind, you can avoid the beginner’s mistake of ignoring control groups.

Of course, there’s more to A/B testing and multivariate testing than control groups. For a more holistic view, you can read up on Leanplum’s mobile A/B testing feature, or contact us for a full demo.

—

Leanplum is the most complete mobile marketing platform, designed for intelligent action. Our integrated solution delivers meaningful engagement across messaging and the in-app experience. We work with top brands such as Expedia, Tesco, and Lyft. To find out more about how Leanplum works, schedule your personalized demo here.
